Product Analytics on Top of Snowflake: Best Platforms in 2026

Explore tools that treat your warehouse as the event source, with a four-category vendor map and a POC checklist for warehouse-native product analytics (PA) on Snowflake.

The default architecture for product analytics has changed. At most SaaS companies past Series A, events now land in Snowflake before they land anywhere else. The instrumentation pipe runs through Segment, Snowplow, RudderStack, or a custom collector. The events flow into a few warehouse tables. The data team models them in dbt. And then someone — a PM, a Head of Data, a CTO — has to answer the next question: what tool do we put in front of these events?

That’s the question this article answers. Not whether the warehouse should be the event source. That decision has been made. The question is which of the tools on the market actually treat the warehouse that way, which ones merely connect to it, and what you give up versus what you gain when you commit to the warehouse-native path.

The reader of this article has already reasoned past the obvious distinctions. They know that some tools extract data and some query it live. They know that funnels, retention, and path analysis are specific primitives, not generic dashboards. They know that “real-time” can mean very different things in a BI tool. They want a vendor map and a clear-eyed list of trade-offs, not a sales pitch.

We try to give them both.

TL;DR

Product analytics is splitting into two camps. One treats events as a dataset that lives inside the analytics tool (Mixpanel, Amplitude classic, Heap). The other treats the warehouse as the source of truth and queries it live (Mitzu, Kubit, NetSpring; general-purpose BI like Astrato, Sigma, Hex, Looker; or compose-your-own dbt + Cube stacks).

For teams investing in their warehouse anyway, the warehouse-native camp reduces total cost and increases ownership of taxonomy and definitions. For teams without analytics-engineering capacity to model events in dbt, the traditional camp is more pragmatic — the PA primitives ship out of the box, and that matters when nobody has time to build them.

The four-category vendor map below sorts the tools that compete in this space. Astrato fits cleanly into category three: warehouse-native BI that serves the product analytics use case when your funnels are already SQL-modeled. It is not a Mixpanel replacement on its own.

The two camps

Product analytics tools fall into two architectural patterns. The pattern matters more than the feature checklist, because it determines where your event data lives, who controls the definitions, and whether you pay twice to run the same query.

Camp 1 — Traditional product analytics

In the traditional pattern, events live inside the analytics tool. You install an SDK in your app, the SDK ships events to the vendor, the vendor stores them in their own event store, and their query engine runs funnels, retention curves, and path analyses on that store. Mixpanel, Amplitude (in classic mode), and Heap are the canonical examples.

The strengths are real. Funnels work out of the box — point and click to define a sequence of events, and the chart appears. Session stitching is automatic, with the SDK handling identity resolution across logged-out and logged-in states. The event taxonomy auto-discovers new event types as they appear in the stream. Behavioral cohort discovery — “users who did X within 7 days of Y” — is a UI primitive, not a SQL exercise. The PM-facing UX is the product, polished by years of iteration.

The trades are also real. The taxonomy lives in the vendor’s tool, which means renaming an event or merging two property values is a vendor action, not a warehouse action. The pricing model — usually monthly tracked users (MTUs) or events ingested — scales with your traffic, not with the work you actually do. If your product gets popular, the bill grows whether the analytics get more useful or not. And the event data is in two places: in the vendor’s store, where the PM queries it, and in your warehouse, where the data team queries it. The two stores drift.

Mixpanel’s own engineering team has been explicit about why their architecture exists. In a 2025 post on Mixpanel’s engineering blog, they argue that Mixpanel’s purpose-built event store delivers funnels up to 7x faster than the same query on Snowflake. It does so by sharding events for in-memory processing and avoiding the fact-on-fact joins and full table scans that dominate warehouse-native funnel runtime. That speed is what you’re paying for.

Camp 2 — Warehouse-native product analytics

In the warehouse-native pattern, events live in your Snowflake account. You collect them with whatever upstream layer you prefer (Segment, Snowplow, RudderStack, custom). They land in a few warehouse tables. Your data team models them in dbt — defining what a session is, what a funnel step is, what an active user is, what a converted user is. The product analytics tool queries those models directly. No copy, no sync, no second store.

The strengths are also real, but different. The warehouse is the single source of truth — the same active_users definition that finance uses for ARR calculations is the one a PM sees in the funnel. There’s no double payment for compute: you’ve already paid Snowflake for the storage and the query engine, and the analytics tool is just emitting SQL against it. Governance is inherited from the warehouse — Snowflake’s row-level security, column masks, and roles flow through to whatever tool is querying. And event data joins naturally with everything else in the warehouse: revenue, support tickets, customer metadata, billing, churn signals. The PM looking at a funnel can break it down by plan tier without an integration project.

The trades are honest and explicit. The PA primitives don’t ship for free — someone has to model them in dbt or in views. Sessions don’t stitch themselves; you write the SQL or you adopt a tool that writes it for you. The PM-facing UX is whatever the tool you put on top provides, which in most cases is a BI surface that wasn’t built specifically for behavioral exploration.
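To make "you write the SQL" concrete, here is a minimal sketch of gap-based session stitching as a window-function query. It assumes a hypothetical events table with user_id, event_name, and ts (epoch seconds) and a 30-minute inactivity rule; both are illustrative choices, not a standard. It runs against in-memory SQLite through Python so the example is self-contained, and the Snowflake dialect will differ in details. It does not attempt identity resolution across logged-out and logged-in states, which is the harder half of what an SDK does.

```python
import sqlite3

# Hypothetical raw events: (user_id, event_name, ts in epoch seconds).
EVENTS = [
    ("u1", "page_view", 0),
    ("u1", "click", 600),        # 10 minutes later: same session
    ("u1", "page_view", 4200),   # 60-minute gap: new session
    ("u2", "page_view", 100),
]

SESSION_GAP_SECONDS = 30 * 60  # assumed inactivity threshold

SESSIONIZE_SQL = """
WITH with_prev AS (
    SELECT user_id, event_name, ts,
           LAG(ts) OVER (PARTITION BY user_id ORDER BY ts) AS prev_ts
    FROM events
),
flagged AS (
    -- A session starts on a user's first event or after a long gap.
    SELECT user_id, event_name, ts,
           CASE WHEN prev_ts IS NULL OR ts - prev_ts > :gap
                THEN 1 ELSE 0 END AS is_new_session
    FROM with_prev
)
SELECT user_id, event_name, ts,
       -- Running count of session starts gives a per-user session number.
       SUM(is_new_session) OVER (PARTITION BY user_id ORDER BY ts) AS session_number
FROM flagged
ORDER BY user_id, ts
"""

def sessionize(events, gap=SESSION_GAP_SECONDS):
    """Return (user_id, event_name, ts, session_number) rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE events (user_id TEXT, event_name TEXT, ts INTEGER)")
    conn.executemany("INSERT INTO events VALUES (?, ?, ?)", events)
    rows = conn.execute(SESSIONIZE_SQL, {"gap": gap}).fetchall()
    conn.close()
    return rows
```

In a dbt project the same query would typically live as a model, with the gap threshold as a project variable so the definition of a session changes in one place.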

The honest claim is this: for teams investing in their warehouse anyway, the warehouse-native pattern collapses the total cost and centralizes the taxonomy. For teams without analytics-engineering capacity, the traditional pattern delivers value faster, with a worse architectural footprint over time.

What you give up by going warehouse-native

This is the section the title doesn’t promise but the article needs. The temptation is to make warehouse-native sound universally better. It isn’t. There are five concrete things you give up.

Naming these gives you the honest baseline against which to evaluate the warehouse-native upside.

What you gain by going warehouse-native

Five things, equally explicit.

A single source of truth. The same definition of “active user” used in the CFO’s MRR calculation is the one used in the PM’s retention chart. When the definition changes, it changes once.

No double payment for compute. You’ve paid Snowflake for the storage and the query. You don’t also pay Mixpanel for storage and a parallel query engine on the same data.

Warehouse-grade governance. Row-level security, column masks, role-based access, and audit logs are enforced at the warehouse layer. The product analytics tool inherits them automatically. There’s no second permissions model to maintain.

Ownership of the taxonomy. Renaming an event, merging two property values, retiring an unused field: these are PRs against your dbt repo, not support tickets to a vendor.

Integration with non-event warehouse data. Funnels broken down by plan tier, retention curves segmented by support-ticket volume, paths for customers above a revenue threshold — these joins are trivial when the events live next to the rest of your business data. In the traditional pattern, they require reverse ETL or a parallel pipeline.

For teams already invested in dbt and Snowflake, these benefits compound. For teams who haven’t made that investment, they’re abstract — and the operational cost of building the warehouse-native foundation is real.

The product analytics primitives, mapped to the warehouse-native pattern

Five primitives define the product analytics category. Each one is a specific kind of question you ask of event data. In the traditional pattern, they’re UI features. In the warehouse-native pattern, they’re SQL patterns over models you build (or that a category-2 tool builds for you).

Funnels

Of the users who started step 1, how many reached step N within a time window? In SQL, this is a series of self-joins against an events table, filtered by event_name and ordered by timestamp, with conversion windows expressed as time difference predicates. In dbt, it’s typically a model that produces one row per user per funnel attempt, with columns for which step they reached. A category-2 tool (Mitzu, Kubit) generates the SQL for you from a UI. A category-3 tool (Astrato, Sigma, Hex, Looker) shows the chart of a model the data team has already built.
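The self-join pattern described above can be sketched as follows. This is a simplified illustration, not what any particular tool generates: it assumes a hypothetical three-step funnel (signup, create_project, first_payment), counts only each user's first attempt, and measures the conversion window from the first step. SQLite via Python stands in for Snowflake so the block runs on its own.

```python
import sqlite3

DAY = 86_400

# Hypothetical events: (user_id, event_name, ts in epoch seconds).
EVENTS = [
    ("u1", "signup", 0), ("u1", "create_project", 3_600), ("u1", "first_payment", 7_200),
    ("u2", "signup", 0), ("u2", "create_project", 10 * DAY),  # outside the window
    ("u3", "signup", 0),
    ("u4", "create_project", 50),  # never signed up, so never enters the funnel
]

FUNNEL_SQL = """
WITH step1 AS (
    SELECT user_id, MIN(ts) AS t1
    FROM events
    WHERE event_name = 'signup'
    GROUP BY user_id
),
step2 AS (
    SELECT s1.user_id, MIN(e.ts) AS t2
    FROM step1 s1
    JOIN events e
      ON e.user_id = s1.user_id
     AND e.event_name = 'create_project'
     AND e.ts > s1.t1                      -- ordered after step 1
     AND e.ts - s1.t1 <= :max_seconds      -- inside the conversion window
    GROUP BY s1.user_id
),
step3 AS (
    SELECT s2.user_id, MIN(e.ts) AS t3
    FROM step2 s2
    JOIN step1 s1 ON s1.user_id = s2.user_id
    JOIN events e
      ON e.user_id = s2.user_id
     AND e.event_name = 'first_payment'
     AND e.ts > s2.t2
     AND e.ts - s1.t1 <= :max_seconds
    GROUP BY s2.user_id
)
SELECT COUNT(s1.user_id), COUNT(s2.user_id), COUNT(s3.user_id)
FROM step1 s1
LEFT JOIN step2 s2 ON s2.user_id = s1.user_id
LEFT JOIN step3 s3 ON s3.user_id = s1.user_id
"""

def run_funnel(events, window_days=7):
    """Return (reached step 1, reached step 2, reached step 3)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE events (user_id TEXT, event_name TEXT, ts INTEGER)")
    conn.executemany("INSERT INTO events VALUES (?, ?, ?)", events)
    row = conn.execute(FUNNEL_SQL, {"max_seconds": window_days * DAY}).fetchone()
    conn.close()
    return row
```

Each extra step adds another join against the events table, which is one reason funnel runtime is worth measuring at production volume before you commit.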

Retention

Of the users active in week 0, what percentage are active in week 1, week 2, week N? The cohort table is a pivot: rows are cohorts (defined by some entry event), columns are subsequent time periods, cells are retention rates. The SQL is a join between the cohort definition and the activity events, aggregated. dbt models for retention are common — they’re the kind of thing you build once per definition of “active” and reuse.
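As a rough sketch of that join-and-aggregate shape, the query below builds a weekly cohort table from a hypothetical activity table of (user_id, day_index), where day_index is an integer day number and the cohort is the week of a user's first activity. The table and column names are assumptions for illustration, and SQLite via Python stands in for Snowflake.

```python
import sqlite3

# Hypothetical activity log: (user_id, day_index), one row per active day.
ACTIVITY = [
    ("u1", 0), ("u1", 8), ("u1", 15),
    ("u2", 1), ("u2", 9),
    ("u3", 2),
    ("u4", 7), ("u4", 14),
]

RETENTION_SQL = """
WITH cohorts AS (
    -- Cohort = week of first activity (integer division by 7).
    SELECT user_id, MIN(day_index) / 7 AS cohort_week
    FROM activity
    GROUP BY user_id
),
active_weeks AS (
    SELECT DISTINCT user_id, day_index / 7 AS active_week
    FROM activity
),
cohort_size AS (
    SELECT cohort_week, COUNT(*) AS n
    FROM cohorts
    GROUP BY cohort_week
)
SELECT c.cohort_week,
       a.active_week - c.cohort_week AS week_offset,
       COUNT(*) AS active_users,
       1.0 * COUNT(*) / s.n AS retention_rate
FROM cohorts c
JOIN active_weeks a ON a.user_id = c.user_id
JOIN cohort_size s ON s.cohort_week = c.cohort_week
GROUP BY c.cohort_week, a.active_week - c.cohort_week, s.n
ORDER BY c.cohort_week, week_offset
"""

def weekly_retention(activity):
    """Return {cohort_week: {week_offset: (active_users, retention_rate)}}."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE activity (user_id TEXT, day_index INTEGER)")
    conn.executemany("INSERT INTO activity VALUES (?, ?)", activity)
    rows = conn.execute(RETENTION_SQL).fetchall()
    conn.close()
    table = {}
    for cohort_week, week_offset, active_users, rate in rows:
        # Pivot: rows are cohorts, columns are weeks since the cohort started.
        table.setdefault(cohort_week, {})[week_offset] = (active_users, rate)
    return table
```

The only business decision in the query is what counts as a row in the activity table, which is why one model per definition of "active" tends to be enough.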

Path analysis

What sequence of events did users take between A and B? The expensive primitive. In SQL, you compute event sequences per user using window functions (LAG, LEAD, or array aggregation), then aggregate sequences by frequency. The output is a Sankey diagram or a tree. Mitzu auto-generates this; in a category-3 BI tool, you’re either modeling it in dbt or rendering a pre-computed sequence table.
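A minimal sketch of the window-function approach, assuming a hypothetical events table of (user_id, event_name, ts): LEAD pairs each event with the one that follows it for the same user, and the pairs are counted by frequency. The output is the edge list a Sankey diagram would be drawn from. SQLite via Python stands in for Snowflake here, and the sketch counts single-step transitions only, not full multi-step paths.

```python
import sqlite3

# Hypothetical events: (user_id, event_name, ts as an ordering key).
EVENTS = [
    ("u1", "home", 1), ("u1", "search", 2), ("u1", "product", 3), ("u1", "checkout", 4),
    ("u2", "home", 1), ("u2", "search", 2), ("u2", "product", 3),
    ("u3", "home", 1), ("u3", "product", 2), ("u3", "checkout", 3),
]

PATHS_SQL = """
WITH transitions AS (
    SELECT event_name AS source,
           LEAD(event_name) OVER (PARTITION BY user_id ORDER BY ts) AS target
    FROM events
)
SELECT source, target, COUNT(*) AS occurrences
FROM transitions
WHERE target IS NOT NULL          -- a user's last event has no next step
GROUP BY source, target
ORDER BY occurrences DESC, source, target
"""

def transition_counts(events):
    """Return (source, target, occurrences) rows, most frequent first."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE events (user_id TEXT, event_name TEXT, ts INTEGER)")
    conn.executemany("INSERT INTO events VALUES (?, ?, ?)", events)
    rows = conn.execute(PATHS_SQL).fetchall()
    conn.close()
    return rows
```

The cost comes from the window function touching every event for every user, so pre-computing this table on a schedule is a common way to keep the dashboard responsive.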

Segmentation

Filter users by attribute combinations and compare metrics across segments. This is the simplest primitive — it’s WHERE clauses and GROUP BY. Every tool handles segmentation, but the warehouse-native advantage is that segments can join across event data and warehouse-resident customer data without an integration step.
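To illustrate the join across event data and customer data, the sketch below segments a hypothetical export_report event by plan tier for US accounts. The events and accounts tables and their columns are assumptions for illustration; SQLite via Python stands in for Snowflake.

```python
import sqlite3

# Hypothetical event stream and warehouse-resident customer table.
EVENTS = [
    ("u1", "export_report"), ("u1", "export_report"),
    ("u2", "export_report"),
    ("u3", "login"),
    ("u4", "export_report"),
]
ACCOUNTS = [
    ("u1", "pro", "US"),
    ("u2", "free", "US"),
    ("u3", "pro", "DE"),
    ("u4", "pro", "US"),
]

SEGMENT_SQL = """
SELECT a.plan_tier,
       COUNT(DISTINCT e.user_id) AS users,
       COUNT(*) AS events
FROM events e
JOIN accounts a ON a.user_id = e.user_id   -- event data meets customer data
WHERE e.event_name = 'export_report'
  AND a.country = 'US'
GROUP BY a.plan_tier
ORDER BY a.plan_tier
"""

def usage_by_plan(events, accounts):
    """Return (plan_tier, distinct users, event count) rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE events (user_id TEXT, event_name TEXT)")
    conn.execute("CREATE TABLE accounts (user_id TEXT, plan_tier TEXT, country TEXT)")
    conn.executemany("INSERT INTO events VALUES (?, ?)", events)
    conn.executemany("INSERT INTO accounts VALUES (?, ?, ?)", accounts)
    rows = conn.execute(SEGMENT_SQL).fetchall()
    conn.close()
    return rows
```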

Cohorting

Closely related to retention but more general — defining a group of users by behavior and tracking them forward. “Users who completed onboarding in Q1, what is their net-revenue retention through Q3?” In the warehouse-native pattern, the cohort definition is a model (or a view) that produces a list of user_ids, and downstream queries join against it. The cohort lives in the warehouse and is reusable across tools.
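One way to picture a reusable cohort is as a view that returns user_ids, with downstream queries joining against it. The sketch below assumes hypothetical events and revenue tables keyed by quarter labels, and computes a simple revenue-retention ratio (Q3 revenue over Q1 revenue for the cohort). Real net-revenue retention definitions vary by company, so treat the formula as an illustrative assumption. SQLite via Python stands in for Snowflake.

```python
import sqlite3

# Hypothetical inputs: behavioral events and recurring revenue per quarter.
EVENTS = [
    ("u1", "onboarding_completed", "Q1"),
    ("u2", "onboarding_completed", "Q1"),
    ("u3", "onboarding_completed", "Q2"),
]
REVENUE = [
    ("u1", "Q1", 100), ("u1", "Q3", 150),   # expanded
    ("u2", "Q1", 100),                      # churned before Q3
    ("u3", "Q2", 200), ("u3", "Q3", 200),   # not in the Q1 cohort
]

COHORT_VIEW_SQL = """
CREATE VIEW cohort_onboarded_q1 AS
SELECT DISTINCT user_id
FROM events
WHERE event_name = 'onboarding_completed' AND quarter = 'Q1'
"""

NRR_SQL = """
SELECT 1.0 * SUM(CASE WHEN r.quarter = 'Q3' THEN r.mrr ELSE 0 END)
           / SUM(CASE WHEN r.quarter = 'Q1' THEN r.mrr ELSE 0 END)
FROM revenue r
JOIN cohort_onboarded_q1 c ON c.user_id = r.user_id
"""

def cohort_revenue_retention(events, revenue):
    """Return (sorted cohort user_ids, Q3/Q1 revenue ratio for that cohort)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE events (user_id TEXT, event_name TEXT, quarter TEXT)")
    conn.execute("CREATE TABLE revenue (user_id TEXT, quarter TEXT, mrr INTEGER)")
    conn.executemany("INSERT INTO events VALUES (?, ?, ?)", events)
    conn.executemany("INSERT INTO revenue VALUES (?, ?, ?)", revenue)
    conn.execute(COHORT_VIEW_SQL)  # the cohort lives in the database, not the tool
    members = [r[0] for r in conn.execute(
        "SELECT user_id FROM cohort_onboarded_q1 ORDER BY user_id")]
    ratio = conn.execute(NRR_SQL).fetchone()[0]
    conn.close()
    return members, ratio
```

Because the cohort is a database object rather than a saved filter inside one tool, any other query or dashboard can join against the same view.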

The pattern across all five: the primitives don’t go away in the warehouse-native model — they move from being UI features inside an analytics tool to being SQL models inside your warehouse. Tools in category 2 abstract that SQL behind a UI. Tools in category 3 render dashboards on top of the SQL the data team writes.

The four kinds of tools you can put in front of a Snowflake event warehouse

These are the four categories of tool that compete to sit in front of your events. Each has a sweet spot. Each has a worst-case fit.

Category 1 — Traditional PA with warehouse sync

Examples: Mixpanel (Warehouse Connectors), Amplitude (classic mode with bidirectional Snowflake integration), Heap.

What they actually do. These platforms have added Snowflake integration to a traditional product analytics architecture. The architecture is still Mixpanel’s, Amplitude’s, or Heap’s — events live in their store, their engine runs the queries, the UI is theirs. The Snowflake integration is one of two patterns: events get exported from the PA tool into Snowflake (so the data team can query them downstream), or warehouse data gets synced into the PA tool (so PMs can join warehouse attributes onto event data).

Mixpanel’s Warehouse Connectors sync data from the warehouse into Mixpanel using warehouse-specific change tracking — Snowflake Streams for change capture, with the events landing in Mixpanel’s own store for querying. This is the opposite direction of warehouse-native: the warehouse is a source, Mixpanel is the destination.

Amplitude’s Snowflake-native mode is genuinely different. Released in GA in mid-2024 and built on Snowpark Container Services, it lets Amplitude run as a native app inside the customer’s Snowflake account — translating Amplitude’s chart definitions into SQL that executes against warehouse-resident data with zero copy. This is closer to true warehouse-native than Mixpanel’s pattern, with the caveat that the experience and pricing model still anchor to Amplitude.

Sweet spot. Teams already deeply invested in Mixpanel or Amplitude who want warehouse access without a migration. Amplitude’s Snowflake-native mode is also a real option for teams who want the Amplitude UX without sending event data outside their Snowflake account — a meaningful compliance win for regulated industries.

Worst-case fit. Teams hoping to escape per-MTU pricing by syncing to Snowflake. The warehouse sync doesn’t change the underlying pricing model; you’re still paying the PA vendor by events ingested, queries run, or seats.

Category 2 — Purpose-built warehouse-native PA

Examples: Mitzu, Kubit, NetSpring.

What they actually do. These tools were built from day one to query the warehouse directly. The user interface is product-analytics-shaped — funnels, retention curves, path diagrams, segmentation — and the engine emits SQL against your Snowflake tables. There’s no second event store. The taxonomy lives in your warehouse (often defined by dbt models the tool reads), and the tool generates SQL that joins, filters, and aggregates against it.

Mitzu connects directly to Snowflake (and BigQuery, Databricks, Redshift, ClickHouse, Postgres, Athena, Trino), reads dbt metric definitions to inherit the semantic layer, and auto-generates SQL for funnels, retention, segmentation, and paths. The tool exposes the generated SQL so a data engineer can audit or optimize any query. Pricing is seat-based rather than event-based — a structural advantage for teams whose event volume scales independently of their team size. Mitzu has published a customer case — Khatabook, an Indian fintech with around 4 billion events per month — describing a 90% reduction in product-analytics spend by moving from Mixpanel to a Mitzu-on-Snowflake stack.

Kubit and NetSpring sit in similar architectural territory, with their own UI choices and pricing models.

Sweet spot. Teams committed to warehouse-native who want PA primitives out of the box. The data team builds and maintains the dbt models; PMs explore funnels, retention, and cohorts through a familiar UI. This is the most direct answer to the question “which tools let me query funnels in real time on Snowflake-resident events?”

Worst-case fit. Teams whose analytics needs extend far beyond product analytics. These tools are good at the PA primitives and less suited to general dashboarding, finance reporting, or external embedded analytics for customers.

Category 3 — General-purpose warehouse-native BI used for product analytics

Examples: Astrato, Sigma, Hex, Looker.

What they actually do. These are warehouse-native business intelligence tools that can serve the product analytics use case when funnels, retention, and cohorts are SQL-modeled in advance. They don’t ship pre-built funnel components; they show whatever the data team has built in dbt, in Snowflake views, or in their semantic layer. The trade-off versus category 2 is breadth — these tools handle PA and finance and exec reporting and customer-facing analytics, where the category-2 tools are PA-focused.

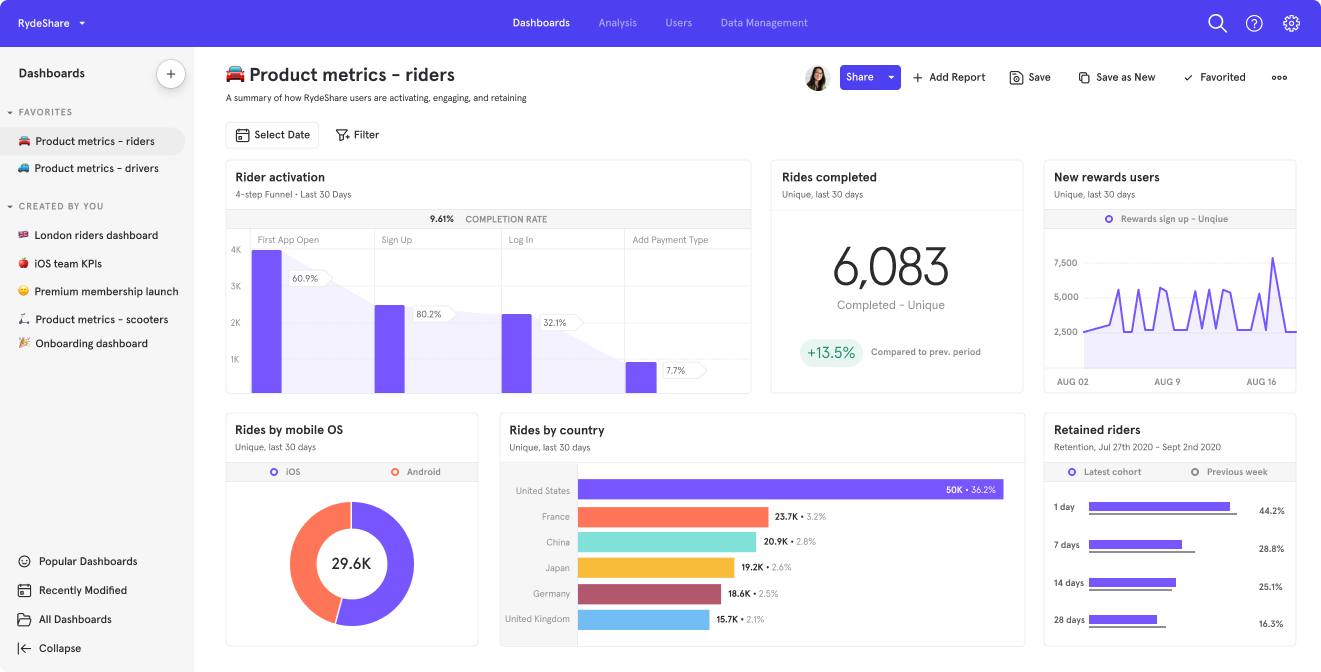

Astrato is a warehouse-native BI platform with live-query architecture against Snowflake (and BigQuery, Databricks, Redshift, ClickHouse, PostgreSQL, Supabase, Dremio, MotherDuck). For product analytics specifically: if your data team has modeled funnels in dbt or as Snowflake views, Astrato renders them as dashboards that respect the warehouse’s row-level security and roles automatically. The semantic layer can consume Snowflake’s native semantic models when present, which means metric definitions defined in the warehouse flow through without duplication. PMs explore curated datasets and pre-defined metrics — the Level-2-and-3 guided self-service pattern covered in our self-service BI on Snowflake article — without filing a ticket every time they want to slice differently.

Astrato adds two things less common in this category. First, multi-LLM AI grounded in the semantic model — Snowflake Cortex (Meta, Claude, DeepSeek, Mistral), Google Gemini, OpenAI, or BYO LLM — for natural-language queries against modeled events. Second, writeback to Snowflake under governed SQL: when a PM identifies a churn cohort and wants to flag those users for downstream intervention, a write to a flagged_users table happens in-place. That closes the loop from insight to action in a way neither Mixpanel nor pure read-only BI tools can.

Sigma sits in adjacent territory with a spreadsheet-fluent UI and input tables for basic writeback. Hex is notebook-first and serves analyst-led PA exploration well; its app-publishing model is one-way, which limits PM self-service. Looker, with LookML, is strong on semantic modeling and weaker on the warehouse-native AI surface for Snowflake specifically.

Sweet spot. Teams committed to warehouse-native who already have (or are building) dbt event models, and whose analytics ambitions extend beyond pure product analytics — finance dashboards, embedded customer analytics, operational workflows. The flexibility comes at the cost of more upfront modeling than category 2 requires.

Worst-case fit. Teams who want product analytics primitives ready to go and don’t want to invest in the dbt foundation first. These tools are honest about that — they won’t pretend to be Mixpanel.

Category 4 — Compose your own

Examples: dbt + Cube + custom UI; dbt + Streamlit; dbt + a notebook tool.

What they actually do. Engineering builds the analytics surface. dbt models the events, Cube (or a similar headless semantic layer) exposes a query API, and the UI is whatever your team writes — React components, a Streamlit app, a Hex notebook embedded in an internal tool. Maximum flexibility, maximum engineering investment.

Sweet spot. Teams with strong engineering capacity, unique requirements that off-the-shelf tools don’t meet, or specific UX commitments to customers that a vendor product can’t satisfy.

Worst-case fit. Anyone who’s not willing to maintain it. The hidden cost isn’t the build; it’s the next three years of support.

Vendor map

A POC checklist for warehouse-native product analytics

Before you commit to a category-2 or category-3 tool, sit with your data team and run these tests on real Snowflake event data. An afternoon is enough.

1. Time-to-first-funnel from a cold start. Pick a real funnel the team cares about — say, signup to first paid action. Time how long it takes to get a working chart from each tool. In category 2, this should be under an hour. In category 3, it depends on whether your dbt models are in shape; if not, the time-to-funnel includes the modeling work. If the modeling work is the gap, that tells you something honest about your readiness.

2. Funnel runtime against your real volume. Run the same funnel query at production scale. Mixpanel’s published claim of an up-to-7x speed advantage over warehouse-native funnel queries holds for the workloads they benchmarked. For most SaaS event volumes (hundreds of millions to a few billion events per month), warehouse-native runtimes are workable but noticeably slower than purpose-built engines on uncached cold queries. Decide whether the latency difference matters at your scale.

3. Session stitching parity. Pick a session metric (median session length, sessions-per-user) and compute it both ways — the warehouse-native model and your existing PA tool. Reconcile the numbers. The gap, if there is one, is the cost of sessions-as-SQL versus sessions-as-SDK. Sometimes the gap is acceptable; sometimes the work to close it is the project.
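The reconciliation step can be as small as the sketch below. It assumes you have exported per-session lengths (in seconds) from both sides; the function name and the 5% tolerance are hypothetical choices for illustration, and what counts as an acceptable gap is a call for your team.

```python
from statistics import median

def reconcile(warehouse_values, vendor_values, tolerance=0.05):
    """Compare median session length from the warehouse model and the PA tool.

    Both inputs are non-empty lists of session lengths in seconds. The
    relative gap is measured against the vendor number, treated here as
    the baseline (an assumption of this sketch).
    """
    w = median(warehouse_values)
    v = median(vendor_values)
    gap = (w - v) / v
    return {
        "warehouse_median": w,
        "vendor_median": v,
        "relative_gap": gap,
        "within_tolerance": abs(gap) <= tolerance,
    }
```

Running the same comparison on sessions-per-user as well as session length helps separate a threshold mismatch from an identity-resolution mismatch.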

4. Behavioral cohort expressiveness. Pick the messiest behavioral cohort the team has ever needed — “users who upgraded to Pro within 14 days of a support ticket about feature X, broken down by acquisition channel.” See how each tool handles it. Category-2 tools will have a UI for most of this; the messy edges fall back to SQL. Category-3 tools will require modeling the cohort first.

5. Governance inheritance. Apply a Snowflake row-level security policy to the events table. Verify that the tool respects it for end users. This is where some tools — particularly older BI platforms with extract-based architectures — quietly fail. The warehouse-native tools should inherit the policy automatically; if they don’t, the tool isn’t really warehouse-native, regardless of marketing.

6. Operational closure. If the tool is supposed to enable action — flagging churn cohorts, triggering campaigns, updating segments — test that path. Read-only PA is one product; PA that closes the loop to operational systems is a different product. Make sure the tool you’re picking is the one you actually need.

If a tool passes all six, the architecture story is real. If it passes four out of six, you’re choosing which compromises you can live with.

The decision: matching tool category to your team

The honest rubric is shorter than most buyer’s guides admit.

The clearest example of a mismatch: choosing Astrato (or Sigma, or Looker) because you want a warehouse-native answer to Mixpanel, and then discovering that your data team doesn’t have time to build the funnel models. The right answer for that team is Mitzu, or staying on Mixpanel for another year while the dbt foundation catches up. Either is more honest than buying a category-3 tool and being disappointed.

Where Astrato genuinely fits

Astrato is a warehouse-native BI platform. Its product analytics story is honest: when your event models live in dbt or as Snowflake views, Astrato renders them as live dashboards, with PM self-service exploration, governance inherited from Snowflake, multi-LLM AI grounded in the semantic model, and writeback for operational follow-through.

BookNook, an education platform delivering virtual tutoring to K-8 students, illustrates the pattern in adjacent territory — not classic SaaS funnels, but persona-based engagement analytics on top of dbt-modeled data in Snowflake. After implementing Astrato, BookNook reported 155% growth in active users, a 54.9% daily return rate, and 8.5x growth in dashboard views. Lorrae Famiglietti, Director of Product Strategy, described the architectural shift this way:

The pattern translates directly to the SaaS product analytics use case: model the events thoughtfully in the warehouse, surface them through a flexible BI layer, drive engagement through dashboards that meet users where they work.

What Astrato is not, said plainly: not a Mixpanel replacement on its own. There are no pre-built funnel components, no automatic session stitching, no behavioral cohort discovery in the Mixpanel sense. If your data team isn’t modeling events in dbt and you want product analytics primitives ready to go, look at Mitzu or Kubit, or stay on Mixpanel until the warehouse foundation is in place. That answer earns more trust than the alternative.

If category 3 sounds like your team — dbt models in place, analytics ambitions beyond pure PA, a need for live-query and writeback on Snowflake — book a demo and we'll show you what your funnels look like running directly against your warehouse. If a different category fits better, we'll say so.

Frequently asked questions

What is warehouse-native product analytics?

Warehouse-native product analytics is the architectural pattern where event data lives in a cloud data warehouse like Snowflake, models live in dbt or as warehouse views, and the analytics tool queries the warehouse directly rather than maintaining a separate event store. It contrasts with traditional product analytics (Mixpanel, Amplitude classic, Heap), where events live in the vendor’s own store. Warehouse-native gives teams a single source of truth and warehouse-grade governance. The cost is that the PA primitives traditional tools ship out of the box (funnels, retention, sessions) have to be built in SQL or generated by a tool that writes that SQL for you.

Can Snowflake replace Mixpanel?

On its own, no. Snowflake stores and queries the events; it doesn’t ship a product analytics UI. The closest Snowflake-native answer is Snowsight plus Cortex Analyst, which is a SQL surface and a natural-language query tool, not a funnel builder. To replace Mixpanel with a warehouse-native stack, you typically pair Snowflake with an event collector (Segment, Snowplow, RudderStack), a modeling layer (dbt), and a tool that provides the PA primitives — either category 2 (Mitzu, Kubit, NetSpring) or category 3 (Astrato, Sigma, Hex, Looker) depending on how much you want to model yourself.

What’s the difference between Mixpanel’s Warehouse Connectors and Amplitude’s Snowflake-native mode?

Mixpanel’s Warehouse Connectors sync data from Snowflake into Mixpanel’s own event store, where Mixpanel’s purpose-built engine runs the queries. The architecture remains Mixpanel-centric. Amplitude’s Snowflake-native mode is structurally different: it runs as a native app inside the customer’s Snowflake account using Snowpark Container Services, translating Amplitude chart definitions into SQL that executes against warehouse-resident data with zero copy. Mixpanel’s pattern is hybrid; Amplitude’s Snowflake-native is closer to true warehouse-native, while still preserving Amplitude’s UI and pricing model.

Do I need dbt to do warehouse-native product analytics?

You don’t strictly need dbt: you can model events in plain Snowflake views, in a headless semantic layer like Cube, or in the BI tool’s own modeling layer. But in practice, most warehouse-native teams use dbt because it gives version control, code review, and lineage for metric definitions. Tools in category 2 (Mitzu) and category 3 (Looker, Astrato to a degree) can read dbt models directly, which means the event models you build for product analytics also feed the rest of your analytics stack.

When does warehouse-native product analytics not make sense?

Three cases. First, if your data team can’t or won’t invest in modeling events — the warehouse-native pattern requires upfront work that doesn’t pay off without that investment. Second, if your event volume is small enough that Mixpanel’s pricing isn’t painful and your PMs need a polished out-of-the-box UI. Third, if your product analytics needs are highly behavioral (deep cohort exploration, ad-hoc behavioral segmentation) and your team’s PMs are not SQL-fluent. In any of these cases, traditional PA — possibly with a Snowflake sync for downstream warehouse work — is the more pragmatic answer until the constraints change.
